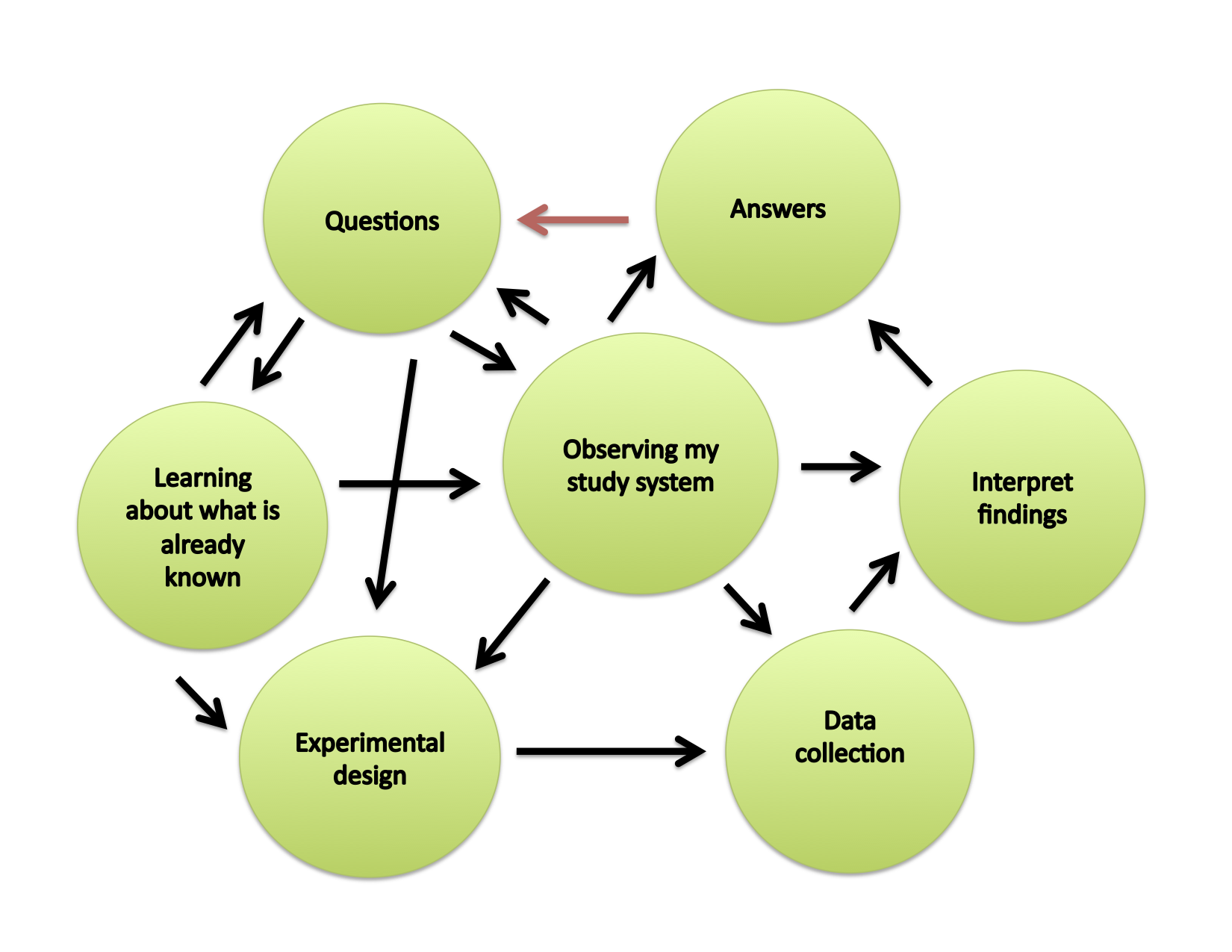

Sometimes I hear questions like, “Why is academic freedom so important? Why should university professors have total control over what they teach?”

Let me answer those questions with a cautionary tale.

Last semester, a shortage of academic freedom in one department at my university caused what can only be characterized as a tragic boondoggle. This is causing an entire cohort of students to graduate one year late.

Over fifty Biology majors were enrolled in the second semester of General Chemistry. An adjunct lecturer planned and taught this course. The tenure-track faculty in Chemistry implemented their own common internal exam to be administered to all General Chemistry students. The instructor was not privy to the contents of this exam while she was teaching this course. Consequently, over the entire semester, the lectures and homework assignments did not correspond to the material that the students were tested on at the end of the course.

The students, who had been performing well throughout the semester, were blindsided by an exam that looked nothing like what they had been studying for the whole semester. This class historically has a pass rate exceeding 80%. Last semester, however, more than 80% of the students failed. The instructor of record for this course, who taught the whole semester, apparently did not have authority over the grading of the exams, nor final authority over the grades that she was directed to submit to the university. This sounds outrageous, but also sounds like the only sensible explanation for what transpired.

Most of these students clearly did not deserve to fail. They did not deserve an exam that did not reflect the content of the course itself. They deserved an instructor who has the authority to control the grades assigned in the course.

The chair of the department is not making any accommodation for the students who got screwed over in her department. The chair claims that the students simply weren’t prepared for the exam. I don’t dispute that fact, but in this circumstance the lack of student preparation is the fault of the Chemistry department, not the students. The students fulfilled the academic expectations of the instructor, but that had no connection to their grade. That is flat-out unethical.

The consequences of this F go well beyond this single course. None of the students can retake the course this semester, because those sections were filled by those who passed the preceding course in the sequence.

The soonest these victims can retake the course is one year after they were originally enrolled, but now we have twice as many students trying to take this course and the Chemistry Department refused to offer any additional sections to its victims from last semester.

This course is a prerequisite to Organic Chemistry, which is a prerequisite for other courses. Nearly all of our majors in this section – more than fifty students – are now going to graduate at least one year later than they had planned.

What’s the worst part of all this? It happened two months ago, and as far as I can tell, the only people who are aware and troubled are the ones who have no power to change anything.

If any of our students had families donating large sums of money to the school, this situation would have been resolved lickety-split. If anybody with authority in Chemistry actually cared about the students, this would have been fixed before the semester ended. If the department had any confidence in its trained contingent faculty, then this unjust situation wouldn’t have emerged.

The students can file a grade grievance, but that won’t fix the problem. It takes at least a year for that process to go through the system. (I served once as a “preliminary investigator” for a grade grievance claim, and the incident happened three semesters earlier.)

You might ask, “Aren’t common exams an effective way to make sure that there is consistency in grading when sections are taught by different instructors?” The answer to that question is yes. However, that consistency has a price. In this case, the price is reasonable academic progress for scores of students. Keep in mind that most of our students work long hours in addition to a full class load, and also have substantial family concerns at home. Being in school is a great challenge, and we just made the climb to graduation even steeper.

The required use of common exams deprives instructors of the academic freedom to evaluate their own students.

If similar events had taken place in any of the three private institutions in which I’ve taught (as adjunct, visiting, and tenure-track), this disgrace would be unthinkable and scandalous. There would be mass protest. But at this disadvantaged university, it’s just one more injustice.

At this point, I’m not even sure if our administrators are aware of this incident. I have a huge amount of confidence in the Dean and the President, who I imagine would do everything they can to resolve this situation, insofar as it is possible. The fact that this problem wasn’t a howling and yelling crisis at their doorstep at the end of last semester is a sad testament to the fact that our students are just accustomed to being disempowered, and they just roll with being wronged. It’s our job, as faculty, to prevent these wrongs at the outset. That starts with giving all instructors that academic freedom over their own workload.

If any instructor is good enough to be hired to teach for the university, then they’re good enough to be trusted by the university to carry out their job independently. Any department that lacks the faith that its own instructors can teach appropriately has huge problems that can’t be fixed by imposing a top-down exam.

–

As a postscript, I should note that common exams are not always a disaster, though I think they are inadvisable. In grad school, I used to teach three sections in a class that had more than 40 sections. All of the TAs gave the same exam, and we had little control over this exam. We didn’t even get to see it until a few days before we taught, because it was a practicum set up at the last moment. I see the need for consistency among sections taught by graduate students with little to no teaching experience. I don’t see the need, however, for this particular solution.

How the heck was I supposed to know what to teach when I didn’t know the basis on which students were going to be evaluated? This was obviously a problem for students. (I also lacked the experience and professionalism to deal with this situation effectively.) This was mostly an annoyance, though, and the students did just fine in the end as best as I can recall. The lab was not overly detailed, and the exams weren’t overly idiosyncratic. As a novice instructor, I found the system to be unfair to both myself and the students. If instructors are teaching a course, they should be able to construct or choose their own evaluation. If for some reason that doesn’t happen, at the very least the faculty need to know exactly what is in exams before the start of the semester.