Undergraduate labor powers many university laboratories. Many of us faculty in primarily undergraduate institutions simply would not be shipping much product without this source of labor. And even in PhD-granting institutions, undergrads are often the labor that makes dissertations possible.

Oftentimes, this is unpaid labor. But in the eyes of many, this form of unpaid labor is not uncompensated. You see, the students doing this work are getting “paid” with course credit.

The financial magic of this arrangement, in which faculty wave a curricular wand to convert graduation requirements into research effort, is deeply embedded among our accepted traditions. It’s the way the world works. Students and faculty just acknowledge that this is the way things have been, and the way they are.


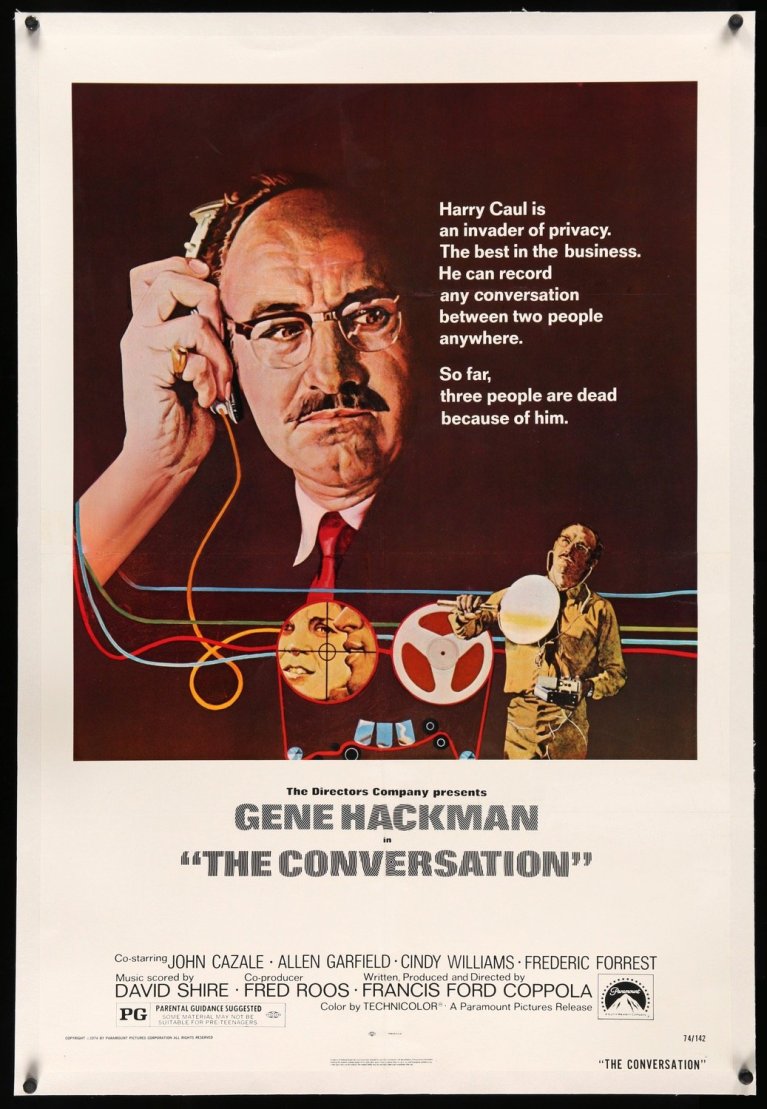

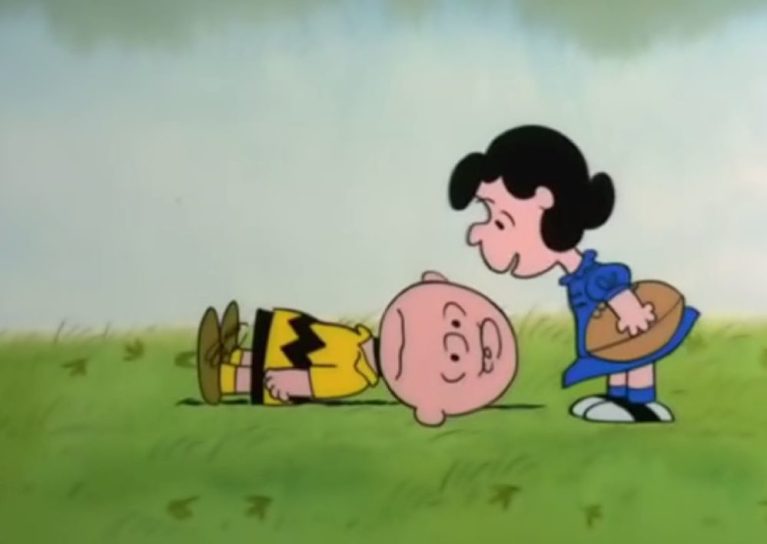
