I see this very often on social media, and also in conversation with other academic editors: it’s getting harder and harder to find people who agree to review manuscripts.

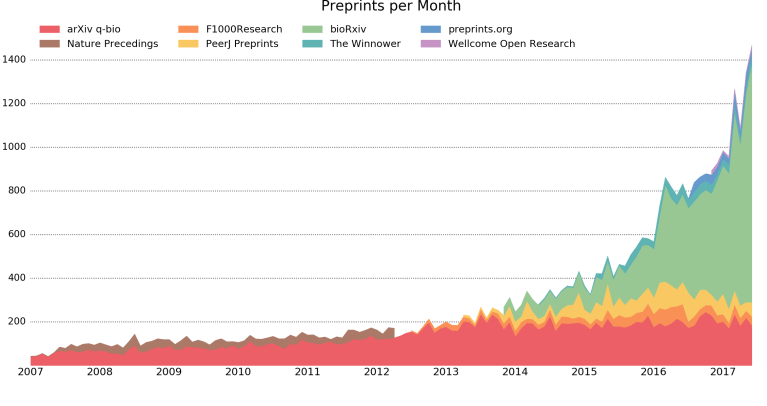

I have no idea whether this reflects the general experience, or if it’s borne out by data. I of course believe the lived experience of my peers, and their accounts make sense given the steady (and absurd) increase in publication rates, with so many people working the manuscript ladder chasing prestige, all compounded by the difficulties of the pandemic. I imagine that some journals have tracked the invitation acceptance rate and how it’s changed over time and perhaps shared this — or maybe it’s in the bibliometric literature — though over the span of a couple minutes my searching powers came up short.

That said, I have to admit that getting reviewers to say yes hasn’t been a problem for me in the course of editorial duties. Even in the depths of this pandemic, I usually haven’t had to ask more than three to five people in order to land two reviewers. Each year, I’ve been handling dozens of manuscripts, so I can’t credibly pin this on the luck of the draw. I don’t know why I don’t have much trouble finding peer reviewers. It presumably is a complex function of the manuscripts themselves, the society affiliation of the journal, how and whom I choose to invite, the financial model of the journal, maybe whether people are more likely to say yes to me as a human being (?), and who knows what else. If you ask people why they say no, I’m sure everybody just thinks it’s because they’re too busy. But if you ask people why they say yes, then that’s where it might get interesting.

The title of this post is off because I clearly don’t know why I don’t have trouble finding reviewers, but it might be informative because I’ll tell you what I’ve been doing, and that might help y’all come to your own conclusions about the Why. I’ve just stepped down from all of my editorial roles, so I thought now is a good time to step back and reflect on how I have identified potential reviewers, and make an attempt at some generalized take-home lessons from this experience.
